Challenges of Building Out A Live Digital Twin

Digital twin technology has developed over time, but what do organizations need to ensure real-time metrics?

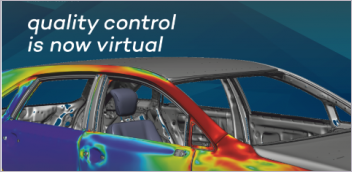

GridRaster claims millimeter accuracy in its mixed reality displays for manufacturing. Image courtesy of GridRaster.

Latest News

November 11, 2022

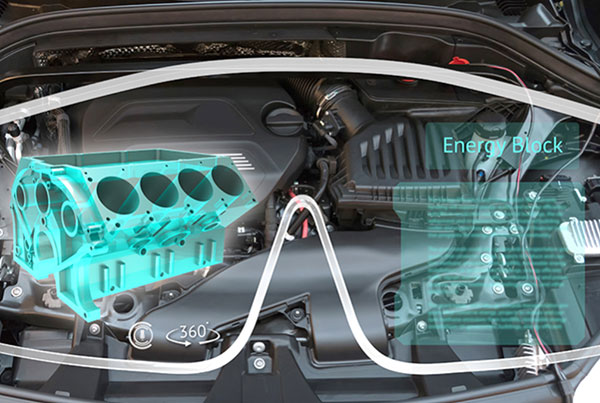

A live digital twin design of the German rail network is in the works by a division of German rail giant Deutsche Bahn. Digitale Schiene Deutschland (DSD) is using various data sources to create the required models, including Siemens JT CAD and simulation data of all rail cars and engines, and building information modeling data of all the stations and rail lines.

Video cameras along 33,000 km of tracks will monitor the network’s real-time status, while artificial intelligence (AI) apps analyze the video feed for anomalies—such as debris on the tracks—in real time. Existing logistics data will be part of the system as well, so live digital twin users could see where any train is at any given time.

When it runs as anticipated, the digital twin will reflect the real-time status of the rail network and provide data for continuous improvement of vehicles and infrastructure. The same data will train the complex AI systems that monitor the network.

DSD says the live digital twin will help Deutsche Bahn maximize transport efficiency and capacity to delay building new infrastructure. As explained by technology partner NVIDIA, the DSD network will come together based on four principles:

- Single source of truth: All datasets come from engineering, logistics and infrastructure.

- Physically accurate: Models are verified by simulation (as designed) and manufacturing (as built).

- Synchronized to reality: Real-time data is continuously fed into the system and used to keep the virtual current with the physical.

- Optimized by AI: Such a vast network requires semiautonomous AI agents watching every track and train.
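The "synchronized to reality" principle can be illustrated with a minimal sketch. Nothing here comes from DSD or NVIDIA: the names `Reading`, `TwinState` and `apply_reading`, and the asset ID, are hypothetical. The sketch assumes only that every reading carries a timestamp and that older data must never overwrite newer data.

```python
from dataclasses import dataclass, field


@dataclass
class Reading:
    asset_id: str      # hypothetical identifier, e.g. a train or track segment
    timestamp: float   # seconds, as reported by the data source
    value: dict        # measured state (position, speed, ...)


@dataclass
class TwinState:
    """Holds the latest known state per asset (illustrative only)."""
    latest: dict = field(default_factory=dict)

    def apply_reading(self, reading: Reading) -> bool:
        """Update the twin; return False if the reading is stale."""
        current = self.latest.get(reading.asset_id)
        if current is not None and reading.timestamp <= current.timestamp:
            return False  # out-of-order or duplicate data is ignored
        self.latest[reading.asset_id] = reading
        return True


twin = TwinState()
twin.apply_reading(Reading("train-42", 100.0, {"km": 12.5}))
twin.apply_reading(Reading("train-42", 90.0, {"km": 11.0}))   # stale, ignored
twin.apply_reading(Reading("train-42", 110.0, {"km": 13.4}))
print(twin.latest["train-42"].value)  # {'km': 13.4}
```

A production system would also have to handle clock skew between sources and missing data, which this sketch does not attempt.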

Cyberspace, Metaverse or Live Digital Twin?

If a certain software company whose most famous product rhymes with “racehook” gets its way, we will be calling Deutsche Bahn’s live digital twin an example of life in the Metaverse. Most commercial discussions of this metaverse today are focused on consumer behavior and social “experiences” augmented by 3D viewing headgear.

“Metaverse” is the most recent name trotted out to identify the intersection of human, internet and visual interaction in a 3D computer-mediated virtual space. William Gibson coined the word “cyberspace” in 1982 to define a 24/7 interactive computer-mediated environment that was more about escapism than productivity.

Digital twin is a much more recent name; Dr. Michael Grieves is widely credited with coining the phrase and defining the concept of a perfect digital match of a manufactured thing. A “live” digital twin has long been implied but was not articulated until recently, first by companies in the Digital Cities space. Now the phrase is being picked up by manufacturing companies and their partners.

Plumbing and Wiring for Live Digital Twin

The rise of model-based design makes scenarios such as the Deutsche Bahn live digital twin possible. The assets have been available for years, and can now be repurposed. The number of software companies moving to make this vision a reality is growing. It is a new infrastructure that requires new investments in software and hardware, the digital equivalent of cement, steel, plumbing and wiring. A live digital twin can’t run from unused hard drive space on one engineer’s workstation.

NVIDIA is pushing its Omniverse platform as a key foundational software resource for the industrial metaverse or live digital twin. The rail network digital twin described above is being created using Omniverse. Omniverse is an extensible visualization platform based on Universal Scene Description (USD), originally created by Pixar for sharing and reusing digital assets for media and entertainment. USD is now an open source framework supported by the largest players in this space, including Unreal Engine and Unity.

Another software infrastructure player in this space is GridRaster, which prefers to call this emerging technology mixed reality (MR).

“Enterprise-grade high-quality MR platforms require both performance and scale,” says Dijam Panigrahi, co-founder and chief operating officer of GridRaster. “Organizations that deploy these gain a rich repository of existing complex 3D CAD/CAM models created over time.”

Panigrahi cautions about reusing existing assets without a clear goal in mind for their new purpose. Adopting an infrastructure big enough to hold and deliver live digital twins requires investment.

“The organizations that take a leadership role will be the ones that not only leverage these technologies, but they will partner with the right technology provider to help scale appropriately without having to stunt technological growth,” he says. Leveraging “cloud-based or remote-server based MR platforms powered by distributed cloud architecture and 3D vision-based AI” will be key, he adds.

In early 2022, GridRaster surveyed more than 3,000 business and manufacturing leaders. The results included insight into using metaverse technology—by any name—in manufacturing:

- More than one-third of manufacturers said 20% of their operations used some form of AR/VR in 2021.

- More than one-half of respondents said close to 50% of their operations would use some form of AR/VR in 2022.

- Manufacturing executives and business leaders feel they will get the most out of the metaverse through AI and software (57%), marketing automation (48%), workflow/business process management (39%), AR/VR (27%), project collaboration (23%) and robotics (17%).

The live digital twin Deutsche Bahn is now building will include ongoing simulation to detect potential part failure. Image courtesy of NVIDIA.

Mixed Reality in Use

Valiant TMS is an intelligent automation specialist in manufacturing. From its base in Windsor, Ontario, Canada, it operates 24 facilities in 13 countries, serving companies primarily in automotive, aerospace and heavy industry.

The company was an early user of sharing 3D data via Microsoft HoloLens technology.

“As a trusted automation partner, our customers expect us to be leaders,” says Suresh Rama, director of business intelligence and innovation at Valiant. Noting how rapidly consumer tech was moving toward immersive display, the company began studying how to take collaborative design review to another level. “We knew we were on the brink of a major shift in how the industrial sector uses technology as we were already seeing it infiltrate this space in small ways.”

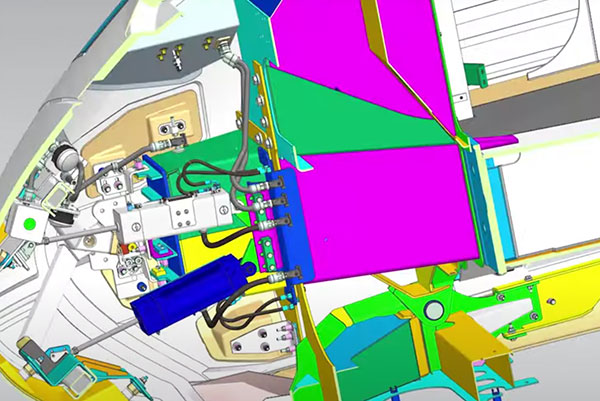

The company saw two potential paths for bringing mixed reality into manufacturing. The first is visualization of existing 3D content as holographic models, something it was already providing with HoloLens headsets and display technology from Theorem Solutions, a leader in CAD data conversion technology.

The second path was to add information transfer, so users could quickly acquire knowledge and then validate design by comparing the virtual and the physical.

“We also needed a way to perform incoming material inspection,” says Raymond Slowik, senior R&D engineer at Valiant. “We felt our customers could benefit from an immersive review of our design proposals to ensure that our equipment will comply with their requirements, specifications and expectations.”

Working with Theorem, Valiant created a new robotic cell for windshield installation for a client. Before adopting the MR solution, the company would perform a laser scan of the area, which required stopping the line for several hours. With the MR system, inspection took place on a regular workday. Engineers were able to attend the on-site review and visualize the tool changes in real time from multiple angles. Valiant estimates its client saved two days of waiting for scanning and processing of the resulting model. The MR data was immediately available, and work continued without needing a weekend or off-shift line stoppage.

Four Drivers of Complexity

These new metaverse environments, whether they are called MR or cyberspace, are voracious consumers of computational resources. NVIDIA tells its partners to divide up the computational needs into four categories, which it calls the four pillars of MR.

The first pillar is photorealism.

“Knowledge transfer requires photorealism,” says Greg Jones, NVIDIA’s director of global business development for mixed reality strategy. Achieving photorealism with real-time delivery requires the newer generations of graphics processing units.

The second pillar is collaboration, which extends beyond people to include AI apps. Much of the work to create and maintain a live digital twin will be done by AI agents, which will learn and report as new data is created.

The third pillar is AI agents. “You need enough compute resources for AI agents to be successful,” says Jones.

The fourth pillar is streaming, which requires the computation power to deliver real-time graphics and the bandwidth between devices and systems to minimize latency.
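To give a feel for the streaming pillar, here is a back-of-the-envelope sketch of the frame-time and bandwidth budget for a streamed headset display. Every number is an assumption chosen for illustration (1920x1080 pixels per eye, two eyes, 24 bits per pixel, 90 frames per second, 100:1 video compression), and the function name `stream_budget` is hypothetical; none of it comes from NVIDIA or GridRaster.

```python
def stream_budget(width, height, eyes, bits_per_pixel, fps, compression_ratio):
    """Return (frame_time_ms, compressed_mbps) for a streamed display."""
    # Time available to render, encode, transmit and decode one frame.
    frame_time_ms = 1000.0 / fps
    # Uncompressed pixel data produced per second, in bits.
    raw_bits_per_second = width * height * eyes * bits_per_pixel * fps
    # Bandwidth after the assumed compression ratio, in megabits per second.
    compressed_mbps = raw_bits_per_second / compression_ratio / 1e6
    return frame_time_ms, compressed_mbps


# Assumed, illustrative display parameters (not vendor figures).
frame_ms, mbps = stream_budget(1920, 1080, 2, 24, 90, 100)
print(f"{frame_ms:.1f} ms per frame, about {mbps:.0f} Mbit/s after compression")
```

Under these assumptions the whole pipeline has roughly 11 ms per frame, which is why both compute power and network latency matter.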

All this takes multiple physical installations, “more than one [graphics processing unit], data center or cloud,” says Jones. In the metaverse for industrial applications, computation will take place inside the environment itself rather than being performed externally and then delivered.

“Even if we infer the simulation, [high-performance computing] will be needed to build training sets” for AI, Jones notes. Working in a virtual factory with AI helping, there will be a mix of flat screen, headgear and computer vision creating and consuming the virtual scenes.

No one we talked to suggested that any of the hardware required to run all this is unavailable today. It is all about deploying existing capabilities in new ways that extend their utility. Computing will take place in data centers, both on-premises and cloud, and often on the edge. 5G and other forms of high-speed wired and wireless data transmission will be required.

In regular use, Jones says this real-time digital twin “will run the simulation, provide views from computer vision and serve up contextual feedback. When a physical asset is built from it, the [virtual version] will maintain it, with the AI watching the real plant and comparing it to the virtual one.”
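The comparison Jones describes can be sketched in a few lines. This is an illustrative simplification, not NVIDIA's method: it assumes the virtual model and a scan of the physical plant each reduce to named 3D positions in millimeters, and the function `find_deviations`, the part names and the 1 mm tolerance are all hypothetical.

```python
import math


def find_deviations(virtual, physical, tolerance_mm=1.0):
    """Return {name: distance_mm} for parts that drift beyond tolerance.

    Parts present in the virtual model but missing from the physical
    scan are reported with a distance of None.
    """
    deviations = {}
    for name, v_pos in virtual.items():
        p_pos = physical.get(name)
        if p_pos is None:
            deviations[name] = None
            continue
        distance = math.dist(v_pos, p_pos)  # Euclidean distance in mm
        if distance > tolerance_mm:
            deviations[name] = distance
    return deviations


# Hypothetical as-designed positions versus positions measured on site.
virtual = {
    "robot_base": (0.0, 0.0, 0.0),
    "fixture_a": (1200.0, 350.0, 0.0),
    "conveyor": (2500.0, 0.0, 0.0),
}
physical = {
    "robot_base": (0.2, -0.1, 0.0),
    "fixture_a": (1203.0, 354.0, 0.0),
}
print(find_deviations(virtual, physical))  # {'fixture_a': 5.0, 'conveyor': None}
```

In a live twin, the physical positions would come from computer vision or sensors rather than a hand-written dictionary, and the check would run continuously.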

About the Author

Randall S. Newton is principal analyst at Consilia Vektor, covering engineering technology. He has been part of the computer graphics industry in a variety of roles since 1985.