Is Your Car a Good Listener?


September 15, 2020

Human drivers are trained to rely on “visibles” and “audibles” to make split-second decisions. An emergency vehicle’s siren, unusual mechanical noises from the engine and the loud bells from railroad crossings are just a few of the auditory alerts that might prompt a sudden change in driving strategy. So, when the human driver is removed from much of the decision-making process in autonomous vehicles, who is listening?

Your car is, as it turns out. There’s a specific discipline that studies the noise, vibration and harshness (NVH) of the vehicle. In classic automotive design, the noise-refinement goal is primarily to reduce the level of undesirable noises in the car’s interior. If your engine noise is too loud, you won’t be able to enjoy the latest Taylor Swift single streaming from your smartphone, for example. But with the emergence of self-driving cars, the discipline takes on new burdens: one is teaching the car to listen.


A Multiphysics Approach

Mads Jensen, technical product manager for Acoustics at COMSOL, is someone who listens to your future car’s hums and whispers. He points out the reversal of acoustic engineering—the shift from noise cancellation to noise amplification—with the emergence of electric vehicles.


“In different countries and regions, the car is required to make a certain level of sound so the pedestrians can hear it approaching. So, with electric cars [which do not have a noisy combustion engine], you now have to actually emit sound with loudspeakers to meet that requirement,” explains Jensen.

Modeling the outbound acoustics of the car, such as sound emitted from the car’s speakers, can be done with the Acoustics Module in the COMSOL Multiphysics software suite. “Acoustics can be coupled with other physical effects, including structural mechanics, piezoelectricity [accumulated electric charge] and fluid flow,” according to COMSOL’s product details page.

“A lot of the car makers are modeling the acoustics inside the car as well. It’s a relatively small acoustic space, but a complex environment. You have people and objects, so you have to use multiple speakers to create sound zones inside the space,” Jensen says.

Imagine a fully autonomous van in which the driver has the option to turn around for chitchat with the passengers sitting in the back. The optimal speaker placements for such a vehicle are significantly different from a typical family van where everyone is facing the front for the most part.

“To figure out the optimal configuration of speakers and sound zones for such a space, you really need to rely on simulation,” adds Jensen.

By itself, sound propagation is not multiphysics; however, the mixture of electromagnetics, structural vibration and sound fields to account for the behavior of the speakers makes the simulation multiphysics—a specialty of COMSOL’s simulation technology, Jensen points out.

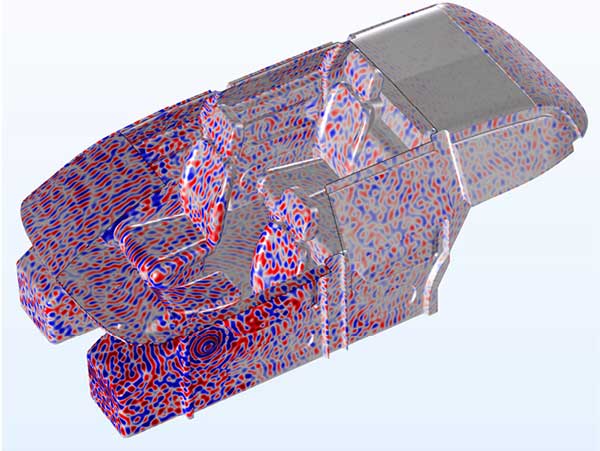

Though electric cars do not have a combustion engine, they do have an electric motor, which generates certain noise and vibration. “These sounds can propagate through the vehicle’s body to create unwanted sound inside the cabin,” Jensen says. “When you have sound fields interacting with the car’s structure, it’s a multiphysics problem.”

Raytracing for Sound Simulation

Engineers traditionally use finite element analysis (FEA) to model the wave fields inside the vehicle, solving the simulation in either the frequency domain or the time domain, Jensen explains.

“With this approach, you need a lot of discrete points or mesh elements to resolve the wavelengths, so it’s computationally expensive … With the latest development in COMSOL software, you can now run the full wave approach to a much higher frequency. Both computational methods and computational power are improving,” says Jensen.
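To see why resolving the wavelengths gets expensive, consider a rough back-of-the-envelope sketch. It is not COMSOL's meshing logic: the cabin volume, the six-elements-per-wavelength rule of thumb and the function names are all assumptions chosen for illustration. Because the wavelength shrinks as frequency rises, the element count in a 3D volume grows roughly with the cube of the frequency.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s in air at roughly 20 degrees C


def wavelength(frequency_hz: float) -> float:
    """Acoustic wavelength in meters for a given frequency."""
    return SPEED_OF_SOUND / frequency_hz


def estimate_elements(volume_m3: float, frequency_hz: float,
                      elements_per_wavelength: int = 6) -> int:
    """Rough count of cubic elements needed to resolve the wavelength.

    Assumption: a uniform mesh of cubes whose edge length is the
    wavelength divided by `elements_per_wavelength`.
    """
    edge = wavelength(frequency_hz) / elements_per_wavelength
    return math.ceil(volume_m3 / edge ** 3)


if __name__ == "__main__":
    cabin_volume = 3.0  # m^3, an assumed value for a passenger cabin
    for frequency in (100.0, 1000.0, 5000.0):
        count = estimate_elements(cabin_volume, frequency)
        print(f"{frequency:7.0f} Hz -> about {count:,} elements")
```

Doubling the frequency in this sketch multiplies the element count by about eight, which is why full wave methods have traditionally been limited to lower frequencies.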

One improvement in the software is the use of the time-explicit discontinuous Galerkin method. “This allows you to model wave propagation in the time domain,” says Jensen. “This approach is extremely suitable for parallel computing.”

The latest strategy is to employ a hybrid approach—a mix of full wave simulation and raytracing. “With raytracing, you can think of soundwaves as particles traveling like rays. When it hits a surface, it bounces back, but some energy is also absorbed by the surface, depending on the surface’s porous or smooth texture,” says Jensen. “That’s an inexpensive way to model acoustics inside a confined space.”
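The ray picture Jensen describes can be sketched in a few lines. This is a minimal illustration, not how any commercial solver works: the cabin is assumed to be an empty rectangular box, reflections are assumed to be perfectly specular, and every wall shares a single hypothetical absorption coefficient. The function name and parameters are invented for the example.

```python
import math


def trace_ray(origin, direction, box, absorption, max_bounces):
    """Follow one sound ray inside an axis-aligned box.

    origin, direction: 3-element sequences (direction need not be unit length)
    box: (length, width, height) of the enclosure in meters
    absorption: fraction of energy lost at each reflection (assumed uniform)
    Returns a list of (distance_travelled, remaining_energy) per bounce.
    """
    norm = math.sqrt(sum(c * c for c in direction))
    d = [c / norm for c in direction]
    p = list(origin)
    energy, travelled, history = 1.0, 0.0, []

    for _ in range(max_bounces):
        # Distance along the ray to the wall it is heading toward, per axis.
        dists = []
        for axis in range(3):
            if d[axis] > 0:
                dists.append((box[axis] - p[axis]) / d[axis])
            elif d[axis] < 0:
                dists.append(-p[axis] / d[axis])
            else:
                dists.append(math.inf)
        step = min(dists)

        # Move to the wall, clamping away floating-point overshoot.
        p = [min(max(p[a] + step * d[a], 0.0), box[a]) for a in range(3)]
        travelled += step

        # Specular reflection: flip the component normal to the wall hit.
        # A corner hit flips several axes but counts as one reflection.
        for axis in range(3):
            if math.isclose(dists[axis], step, rel_tol=1e-9, abs_tol=1e-12):
                d[axis] = -d[axis]

        energy *= 1.0 - absorption
        history.append((travelled, energy))
    return history


if __name__ == "__main__":
    # Hypothetical cabin of 2.5 m x 1.5 m x 1.2 m with 30% absorption.
    for distance, energy in trace_ray((1.0, 0.7, 0.6), (1.0, 0.4, 0.2),
                                      (2.5, 1.5, 1.2), 0.3, 5):
        print(f"after {distance:5.2f} m: {energy:.3f} of the energy remains")
```

Each bounce costs only a handful of arithmetic operations, regardless of frequency, which is what makes the approach cheap compared with meshing the whole volume. A real model would assign a different absorption value to each surface material.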

An important step in the acoustic simulation is the treatment of the CAD geometry, which defines the space in which soundwaves propagate.

“The better you can prepare your CAD geometry for meshing and simulation, the more accurate your simulation is,” says Jensen.

Too Quiet for Safety

Ales Alajbegovic, vice president of worldwide industry process success & services at Dassault Systèmes’ SIMULIA, recalled a cab ride to the Munich International Airport. The cab driver was complaining about the loud wind noise, but the wind was not particularly strong that day.

“When you remove the noise of the combustion engine, the noise inside the cabin is reduced significantly. But as a result, you start to notice other noises, like wind noises, and much more,” he observes. “In many cases, they may be even more bothersome than a car with a combustion engine.”
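The effect Alajbegovic describes follows from how sound levels combine. Independent sources add by acoustic power rather than by decibel value, so a loud source dominates the total and hides quieter ones. The sketch below uses made-up cabin levels purely for illustration; the function names are likewise assumptions.

```python
import math


def combine_levels(levels_db):
    """Total level of independent (incoherent) sources, in dB.

    Powers are summed, not the decibel values themselves.
    """
    total_power = sum(10.0 ** (level / 10.0) for level in levels_db)
    return 10.0 * math.log10(total_power)


def power_share(level_db, levels_db):
    """Fraction of the total acoustic power contributed by one source."""
    total_power = sum(10.0 ** (level / 10.0) for level in levels_db)
    return 10.0 ** (level_db / 10.0) / total_power


if __name__ == "__main__":
    # Hypothetical in-cabin levels, in dB, chosen only for illustration.
    engine, wind, squeak = 70.0, 62.0, 55.0

    with_engine = [engine, wind, squeak]
    without_engine = [wind, squeak]

    print(f"total with engine:    {combine_levels(with_engine):.1f} dB")
    print(f"total without engine: {combine_levels(without_engine):.1f} dB")
    print(f"wind share with engine:    {power_share(wind, with_engine):.0%}")
    print(f"wind share without engine: {power_share(wind, without_engine):.0%}")
```

With these assumed numbers, the overall level drops once the engine is removed, yet wind noise goes from a small share of the total to the dominant source, which is why it suddenly becomes noticeable.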

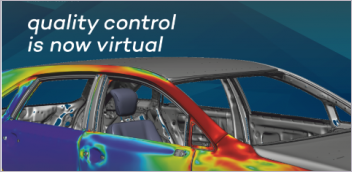

Wind noise simulation showing turbulence and the resulting acoustic waves on glass panels. Image courtesy of Dassault Systèmes.

Wind noise is aerodynamic in origin: it comes from the friction between the airflow around the car and the car’s body. Therefore, “the shape of the car is crucial to what you hear in the cabin,” says Alajbegovic. One way to minimize it is to reduce drag by modifying the geometry of the car’s body.

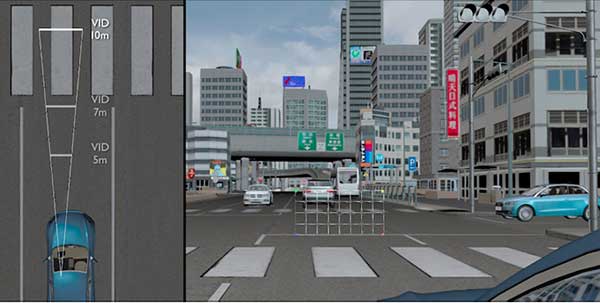

“The autonomous car itself has to react to the environment. So, in addition to seeing [using real-time video feed and computer vision], it also has to listen to what’s happening around it. That means the microphones in the car have to be placed properly to hear the environment better,” says Siva Senthooran, director of technical sales at SIMULIA Centers of Excellence.

Dassault Systèmes offers several tools for aerodynamic simulation and geometry modeling in its 3DEXPERIENCE product line. PowerFLOW software, based on transient Lattice Boltzmann physics, includes features for thermal, aerodynamic and aeroacoustic simulation. The company also offers Wave6 software for vibro-acoustic and aero-vibro-acoustic simulation, and Abaqus, a structural analysis suite.

Can You Hear Your Car?

In May 2018, Ansys acquired OPTIS, an optical simulation software developer. Subsequently, Ansys also acquired GENESIS, an acoustic simulation software developer. The latter acquisition enables Ansys software to generate not only a visual representation of sound fields but also the audio: in theory, what you would hear inside the vehicle.

“The electric vehicles are quiet, maybe too quiet. So secondary noises like hatchback noise and duct effects become clearly audible. Also, the electric motor has a fast rotation, which creates a whining sound,” says Patrick Boussard, senior manager, product management at Ansys. Boussard founded GENESIS before its acquisition.

“Because the car is too quiet, any squeak or creak from the panels or misalignment in the windows gets noticed. There’s nothing to drown out these sounds,” says Siddharth Shah, principal product manager, Ansys.

Acoustic analysis becomes more complex when autonomous driving is combined with quieter electric powertrains. With less need to focus on the road, people in autonomous vehicles are more likely to listen to music, watch a movie, engage in conversation or even take a nap. That means the vehicle needs to create a variety of sounds to alert the passengers to lane changes, emergency stops and the need for someone to assume manual control.

Using Ansys VRXPERIENCE Sound, users can virtually experience the cabin of a car still in development, visually and auditorily.

“It adds signal processing, so you can hear all the sounds from different audio sources in a surround environment,” explains Boussard.

“What you can do with VRXPERIENCE is to assess the sound,” says Shah. “With it, you can tell whether you increase the sound by a certain decibel if you make changes to the mirror, the vent or the profile of the car, for example.”

In autonomous cars, the in-cabin audio experience takes on new importance, as drivers and passengers may demand a better environment for entertainment (movies, music and conversation, for example). But the car must now also learn to listen and react to the sound signals that drivers may no longer be actively listening for.


About the Author

Kenneth Wong is Digital Engineering’s resident blogger and senior editor. Email him at kennethwong@digitaleng.news or share your thoughts on this article at digitaleng.news/facebook.
